I used to be completely addicted to MOBAs. Every day I would come home from work, stick some mac’n’cheese in the microwave and settle in for a solid six hours of ganks, pushes, and base racing. Before I realised it, my evening was over. At the time, I might have thought that I was just having fun. Slowly, though, I began to realise just how much of a negative impact the games, and particularly the other players, were having on my mood and well-being.

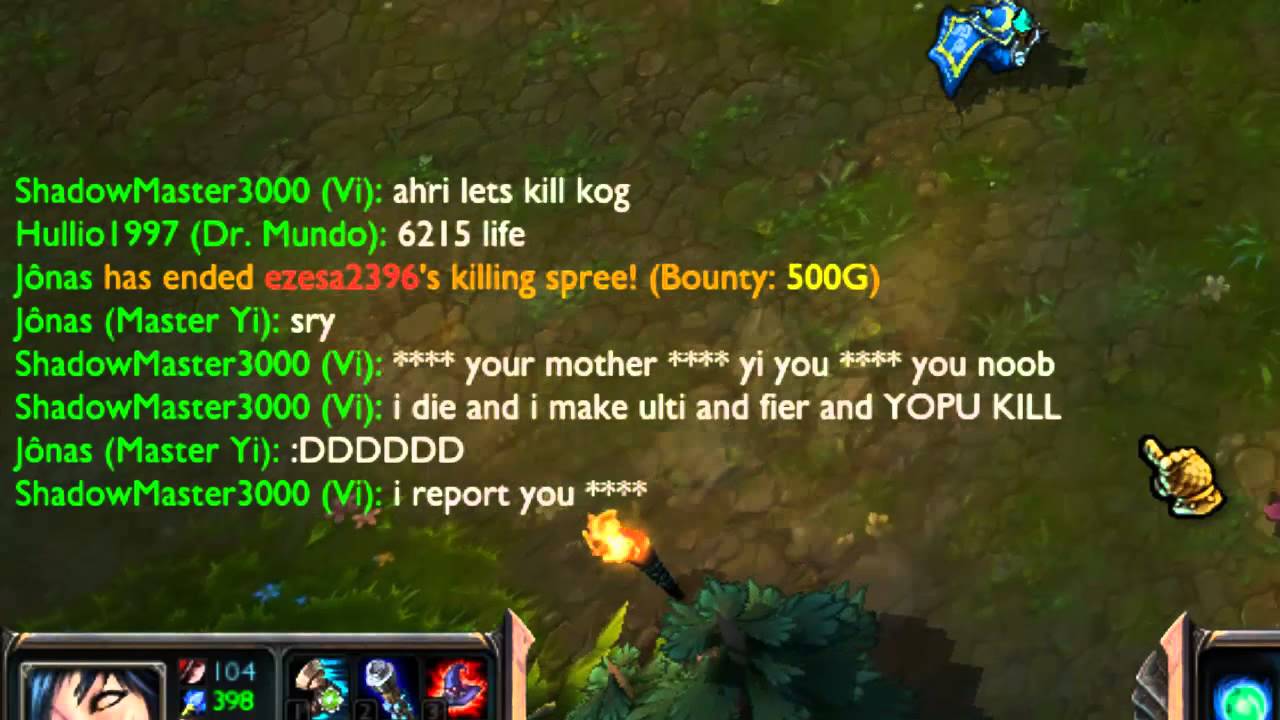

By playing games online we naturally expose ourselves to comments and reactions from other players, but when those comments become abusive we have an entirely different issue to deal with. I have lost count of the number of times I was told to “kill myself” by a high-pitched child (we laugh at these stereotypes, but they are very much present!) or called every kind of homophobic, transphobic, or racist slur you can think of.

With mental health issues being disproportionately high for LGBT+ people, people of colour, and women, these kinds of comments can have an effect long after the game is over. We can, of course, mute other players and report their comments, but in most cases, the damage has already been done, and publishers need to recognise the impact of the communications that go on in their games.

Managing the Impact

In DOTA2, receiving enough reports can result in a player being muted for some time, or placed in the “low-priority queue”, where they must play a certain number of games in a random game mode with other players who have also been reported. In many cases, this is not effective: players continue to rage and flame others in low-priority, and to message people outside of the game telling them to delete the game or kill themselves.

As an issue, this of course extends far beyond the computer screen. We exist in a dangerous world where bigoted and prejudiced ideas are becoming more and more common, and the more young people are able to freely voice these opinions from the safety of a computer screen, with little to no negative consequences, the further these oppressive ideas will spread.

E-Sports as an industry is rapidly growing, and whilst I no longer play the games, I still follow tournaments and the professional scene. However, we don’t need to look too far to see examples of this kind of negative behaviour – some streamers and pro players are known for raging at or flaming others, such as DotA 2’s ppd and League of Legends player Dunkey (who has been banned), who believes that flaming is an integral part of the game. With these kinds of people as ambassadors for the games, is it any wonder their actions are echoed by other players?

Moving forward, can there be a way for publishers to truly combat toxic behaviour in games? Do publishers have a responsibility to ensure that all players are safe and supported? After all, many people play games to briefly escape from the world they live in and enter a new one, but when that world is filled with just as much bigotry and anger as our own, what incentive is there to keep on playing?

Jack Williams
